Deadlock-free LLM agent coordination

ZipperGen is a Python DSL and runtime for structured multi-agent LLM coordination. You write a single global protocol describing what messages flow between which agents and who owns each decision. ZipperGen projects it onto each agent and runs them concurrently, with deadlock-freedom guaranteed by construction. The guarantees are formally established in our paper.
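To make the idea of projection concrete, here is a minimal sketch in plain Python. It does not use ZipperGen; `Msg`, `project`, and the tuple-based local programs are hypothetical names invented for illustration, and the sketch covers only a straight-line sequence of messages, with no branching or LLM calls. Each agent's local program is simply the global protocol restricted to the steps that agent takes part in, in the same order.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Msg:
    """One global step: `sender` passes the value of `var` to `receiver`."""
    sender: str
    receiver: str
    var: str


def project(protocol, agent):
    """Keep only the steps that involve `agent`, preserving the global order."""
    local = []
    for step in protocol:
        if step.sender == agent:
            local.append(("send", step.receiver, step.var))
        if step.receiver == agent:
            local.append(("recv", step.sender, step.var))
    return local


# A toy global protocol (hypothetical, for illustration only).
protocol = [
    Msg("User", "Writer", "topic"),
    Msg("Writer", "Editor", "tweet"),
    Msg("Editor", "User", "tweet"),
]

local_programs = {a: project(protocol, a) for a in ("User", "Writer", "Editor")}
for agent, program in local_programs.items():
    print(agent, program)
```

Because every send in one local program has exactly one matching receive in another, and both appear in the order fixed by the global protocol, the local programs agree on who talks to whom and when.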

Quick start

git clone https://github.com/zippergen-io/zippergen.git
cd zippergen
pip install -e .

Python 3.11 or later is required.

Hello, World!

Three agents collaborate: User supplies a topic, Writer drafts a tweet, and Editor decides whether it's good enough. ZipperGen handles the coordination. No API key is needed; the built-in mock backend runs instantly.

from zippergen.syntax import Lifeline, Var
from zippergen.actions import llm
from zippergen.builder import workflow

User = Lifeline("User")
Writer = Lifeline("Writer")
Editor = Lifeline("Editor")

topic = Var("topic", str)
tweet = Var("tweet", str)
approved = Var("approved", bool)

@llm(system="Write a one-sentence tweet about the topic.",
     user="{topic}", parse="text", outputs=(("tweet", str),))
def draft(topic: str) -> None: ...

@llm(system="Is this tweet engaging, original, and under 180 chars? Reply true or false.",
     user="{tweet}", parse="bool", outputs=(("approved", bool),))
def approve(tweet: str) -> None: ...

@llm(system="Improve this tweet: shorter and punchier.",
     user="{tweet}", parse="text", outputs=(("tweet", str),))
def revise(tweet: str) -> None: ...

@workflow
def write_tweet(topic: str @ User) -> str:
    User(topic) >> Writer(topic)
    Writer: tweet = draft(topic)
    Writer(tweet) >> Editor(tweet)
    Editor: approved = approve(tweet)
    if approved @ Editor:
        Editor(tweet) >> User(tweet)
    else:
        Editor(tweet) >> Writer(tweet)
        Writer: tweet = revise(tweet)
        Writer(tweet) >> User(tweet)
    return tweet @ User

# No API key needed; runs with the built-in mock backend.
# Switch to a real LLM: write_tweet.configure(llms="openai")
result = write_tweet(topic="a git commit message that tells the truth")
print(result)

Using real LLMs

Set your API key, pass a provider name, and run:

export OPENAI_API_KEY=...

my_workflow.configure(llms="openai", ui=True)
result = my_workflow(notes="...", diagnosis="...")

Built-in providers: openai, mistral, claude.

Different agents can use different providers:

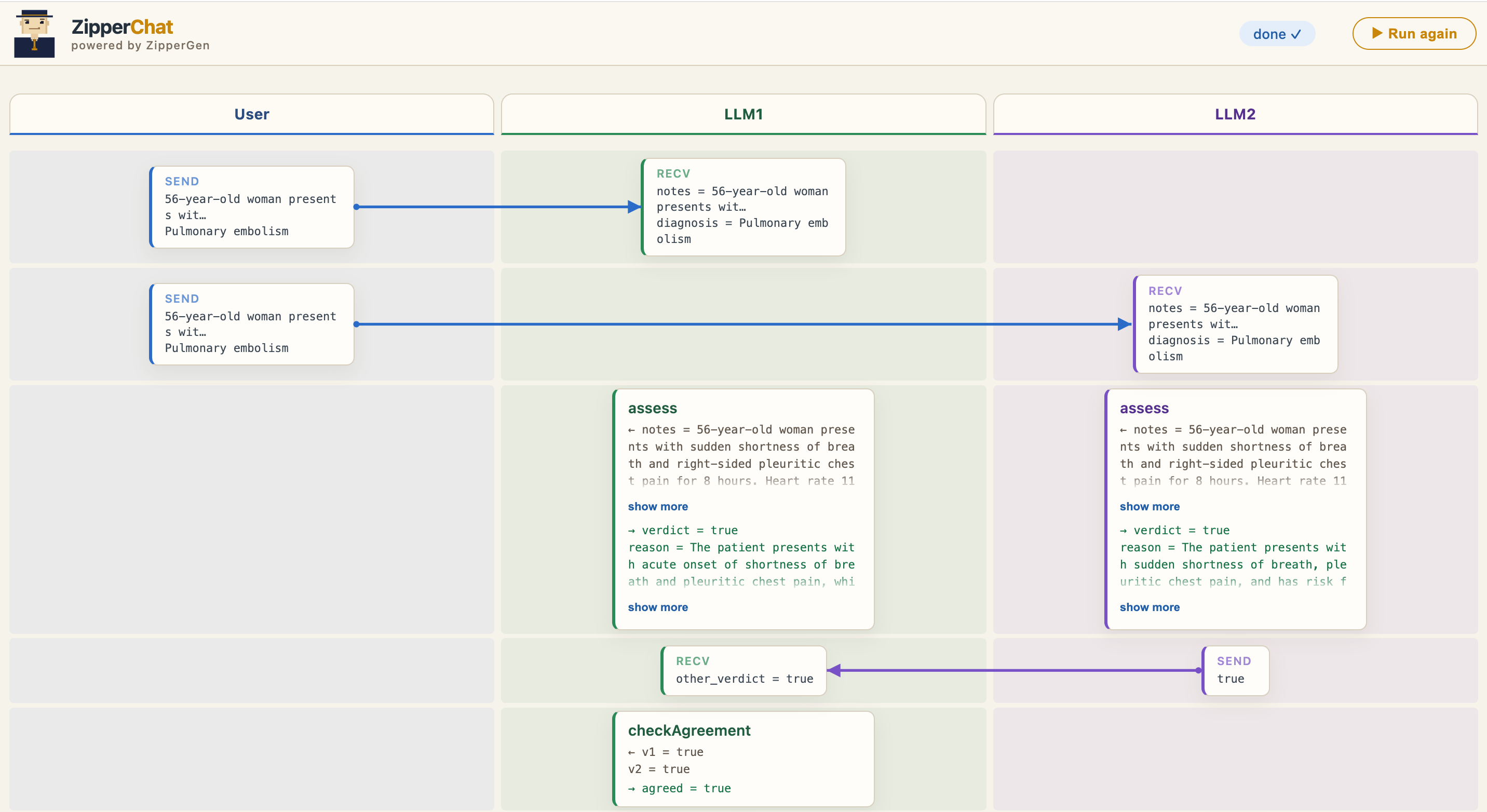

my_workflow.configure(llms={"LLM1": "mistral", "LLM2": "openai"})

ZipperChat

Pass ui=True to open a live message sequence chart in your browser as the agents run. Watch who sends what, who is thinking, and where the workflow currently is.

Formal foundation

ZipperGen is grounded in the theory of Message Sequence Charts. The projection from a global protocol to per-agent local programs is syntax-directed, and deadlock-freedom follows by structural induction; no runtime checking required. The core theorems have been machine-checked in Lean 4:

Bollig, Függer, Nowak. Provable Coordination for LLM Agents via Message Sequence Charts. arXiv:2604.17612 [cs.PL]
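For intuition only, the following sketch runs three hand-written local programs concurrently over FIFO channels, using nothing but the Python standard library. It is not ZipperGen's runtime and it proves nothing; the step format, the `run` function, and the stand-in `draft` action are all assumptions made for this illustration. The point it shows is that when every receive has a matching send earlier in the same global order, each thread eventually gets the message it waits for, so all threads finish.

```python
import queue
import threading


def draft(topic):
    # Stand-in for an LLM call (hypothetical; no model is contacted).
    return f"Draft tweet about {topic}"


# Hand-written local programs for the global order:
# User -> Writer (topic), Writer -> Editor (tweet), Editor -> User (tweet).
LOCAL = {
    "User": [("send", "Writer", "topic"), ("recv", "Editor", "tweet")],
    "Writer": [
        ("recv", "User", "topic"),
        ("call", draft, "topic", "tweet"),
        ("send", "Editor", "tweet"),
    ],
    "Editor": [("recv", "Writer", "tweet"), ("send", "User", "tweet")],
}

# One FIFO channel per ordered pair of distinct agents.
channels = {(a, b): queue.Queue() for a in LOCAL for b in LOCAL if a != b}


def run(agent, program, store):
    """Execute one agent's local program against its private variable store."""
    for step in program:
        if step[0] == "send":
            _, peer, var = step
            channels[(agent, peer)].put(store[var])
        elif step[0] == "recv":
            _, peer, var = step
            # The timeout only guards this demo; a matching send always arrives.
            store[var] = channels[(peer, agent)].get(timeout=5)
        else:  # "call": apply a local action to one variable
            _, func, arg, out = step
            store[out] = func(store[arg])


stores = {"User": {"topic": "a git commit message"}, "Writer": {}, "Editor": {}}
threads = [
    threading.Thread(target=run, args=(agent, LOCAL[agent], stores[agent]))
    for agent in LOCAL
]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(stores["User"]["tweet"])
```

Swapping the two steps of Editor's program, so that it sends before it receives, would make its thread fail on a missing variable; local programs that are generated from one global protocol rather than written by hand rule out this kind of mismatch.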